Geek is Good Photobooth

I created an interactive display for the Geek is Good exhibition, held at the Oxford Museum of the History of Science from May to September 2014.

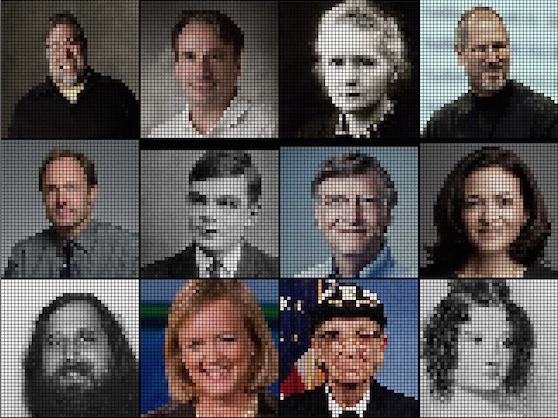

The exhibit is an interactive photo gallery that allows a visitor to snap their portrait and have it translated into a pixellated ‘geek’ portrait. The system uses a Raspberry Pi with a camera board and a giant illuminated red pushbutton.

How it Works

When the application first starts up, it loads the last few images that were captured (or, if none can be found, some portraits of famous computer scientists and entrepreneurs) and displays them on the screen. When a user presses the giant pushbutton, the gallery is replaced with a live preview from the camera, overlaid with a countdown timer. When the countdown reaches 0, the screen flashes white and the camera grabs an image. The code then searches for faces in the image and crops the image to fit them. This sub-image is then pixellated and added to the gallery. The 12 most recent captures are shown on-screen at any one time.
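The crop, pixellate and "last 12" steps can be sketched in a few lines. This is only an illustration under stated assumptions, not the exhibit's actual code: it works on a plain list of grey levels instead of a camera frame, it takes the face box as given (the real system finds it with OpenCV), and the names `pixelate`, `crop`, `add_portrait` and `GALLERY_SIZE` are made up for the example.

```python
from collections import deque

GALLERY_SIZE = 12  # the gallery shows the 12 most recent portraits

# A deque with maxlen drops the oldest portrait when a new one arrives.
gallery = deque(maxlen=GALLERY_SIZE)


def crop(pixels, box):
    """Cut a (left, top, width, height) box out of a 2D list of pixels."""
    left, top, width, height = box
    return [row[left:left + width] for row in pixels[top:top + height]]


def pixelate(pixels, block):
    """Replace every block x block tile with the tile's average grey level."""
    height, width = len(pixels), len(pixels[0])
    out = [row[:] for row in pixels]
    for top in range(0, height, block):
        for left in range(0, width, block):
            rows = range(top, min(top + block, height))
            cols = range(left, min(left + block, width))
            tile = [pixels[y][x] for y in rows for x in cols]
            mean = sum(tile) // len(tile)
            for y in rows:
                for x in cols:
                    out[y][x] = mean
    return out


def add_portrait(frame, face_box, block=2):
    """Crop the face, pixellate it and push it onto the gallery."""
    portrait = pixelate(crop(frame, face_box), block)
    gallery.append(portrait)
    return portrait


# A tiny 6x4 "frame" of grey levels standing in for a camera image.
frame = [[x + 10 * y for x in range(6)] for y in range(4)]
portrait = add_portrait(frame, (1, 0, 4, 4))
```

A larger `block` gives a chunkier portrait. Averaging each tile is just one way to pixellate; shrinking the image and scaling it back up without smoothing gives a similar look.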

Software

The code is written in Python and is available on GitHub. It makes use of pygame, pigpiod, OpenCV, and the Python Imaging Library.

Hardware

The enclosure was created by the museum staff and is a wooden box with the Raspberry Pi, monitor and pushbutton mounted within it. The pushbutton is a normally open type, connected between GPIO24 (pin 18) and ground (pin 20). The GPIO generates an interrupt when it detects that the button has been pressed, which triggers a callback within the software. The callback puts an event on the pygame event queue that causes the system to take a photo. I flush the queue regularly while the software is capturing a picture, so that contact bounce in the switch and eager visitors don’t overwhelm it.
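The callback-and-flush idea is easier to see in miniature. The sketch below is a simulation, not the exhibit's code: a standard-library `queue.SimpleQueue` stands in for the pygame event queue, `button_pressed` stands in for the GPIO callback, and the names `TAKE_PHOTO`, `flush` and `poll` are invented for the example. In the real software the equivalent calls would presumably be `pygame.event.post` and `pygame.event.clear`, but that is an assumption.

```python
import queue

TAKE_PHOTO = "take_photo"     # hypothetical event name
events = queue.SimpleQueue()  # stand-in for the pygame event queue


def button_pressed():
    """GPIO callback: fires once per detected edge, bounces included."""
    events.put(TAKE_PHOTO)


def flush(q):
    """Throw away everything currently queued; return how many were dropped."""
    dropped = 0
    while True:
        try:
            q.get_nowait()
        except queue.Empty:
            return dropped
        dropped += 1


def poll(stages):
    """Handle one queued event; returns True if a capture ran.

    `stages` is a list of callables making up one capture (countdown,
    flash, grab, ...). The queue is flushed after every stage, so presses
    that arrive during a capture never trigger another one.
    """
    try:
        event = events.get_nowait()
    except queue.Empty:
        return False
    if event != TAKE_PHOTO:
        return False
    for stage in stages:
        stage()
        flush(events)
    return True
```

With this arrangement a single press that bounces three times still produces one photo: the first event starts the capture and the other two are discarded by the first flush.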